Quality assurance teams often struggle to interpret raw test output from command-line tools like pytest. Pytest runs automated tests efficiently, but its default console output can be hard to read, especially for stakeholders without a development background. That lack of clarity can slow feedback cycles and bury important failure details.

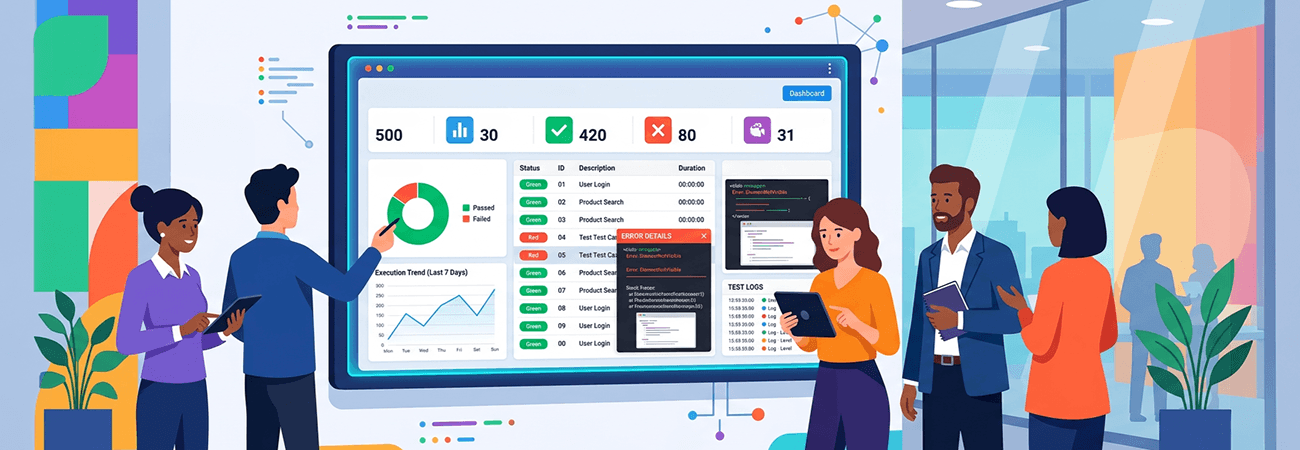

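A practical solution is the pytest-html plugin, which generates easy-to-read HTML reports from test runs. After installing it with `pip install pytest-html`, passing an option such as `--html=report.html` to pytest writes the report to that file. The report presents each test's outcome and duration alongside captured logs and stack traces for failures. Screenshots can be attached as well, but only when the test suite is configured to add them.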
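As a rough illustration of wiring the plugin into a test run, the sketch below builds and launches a pytest command with the `--html` and `--self-contained-html` options that pytest-html provides. The helper names `build_pytest_html_command` and `run_with_report`, the `tests` directory, and the `reports/report.html` path are assumptions made for this example, not part of pytest or the plugin.

```python
import subprocess
import sys
from pathlib import Path


def build_pytest_html_command(test_path, report_path):
    """Return the argument list for a pytest run that writes an HTML report.

    Hypothetical helper: only the two pytest-html options below are real CLI flags.
    """
    return [
        sys.executable, "-m", "pytest",   # run pytest with the current interpreter
        test_path,                        # file or directory containing the tests
        f"--html={report_path}",          # pytest-html: where to write the report
        "--self-contained-html",          # pytest-html: inline assets into one file
    ]


def run_with_report(test_path="tests", report_path="reports/report.html"):
    """Run the tests and return pytest's exit code (0 means all tests passed).

    Assumes pytest and pytest-html are installed in the current environment.
    """
    # Make sure the output directory exists before pytest writes into it.
    Path(report_path).parent.mkdir(parents=True, exist_ok=True)
    command = build_pytest_html_command(test_path, report_path)
    return subprocess.run(command).returncode
```

Calling `run_with_report()` from a script or CI step would leave a single HTML file behind that can be archived or shared. The self-contained option is used here because one file is easier to pass around than a report with a separate assets folder.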

This approach makes test results persistent and shareable across teams. Rather than disappearing when a terminal session ends, an HTML report provides a lasting record of each test run. That clarity speeds up debugging and lets non-developers, such as product managers and QA analysts, interpret results and contribute to decisions more effectively.

The key takeaway is that test reporting should extend beyond the command line. Clear, detailed, and persistent reports reduce friction in identifying issues, improve collaboration across roles, and strengthen overall software quality. As automated testing remains a cornerstone of modern development, tools like pytest-html help bridge the gap between technical execution and actionable insight.

For development teams focused on quality and transparency, HTML test reporting offers a streamlined and scalable improvement to traditional testing practices.